In a recent blog post I presented a framework linking research uses to research techniques. Today's blog focuses on two of the applied research uses: strategic marketing management and marketing performance. Strategic marketing management involves getting the big picture by understanding opportunities, problems, and potential targets. Marketing performance focuses on monitoring and analyzing what is happening in the market.

Susan Frede

As Vice President of Research Methods and Best Practices at Lightspeed from 2010 to 2017, Susan designed, conducted and analyzed numerous research-on-research projects to improve panel performance, respondent quality and survey data quality. With nearly 23 years of market research experience, she has published numerous research-on-research papers and is a well-respected speaker at key industry events. Susan worked closely with clients on quality initiatives and survey integrity, offering insight and consultation on research design, applications, execution and delivery. In addition, Susan played a key role in training and development within Lightspeed as well as the larger Kantar organization.

Before joining Lightspeed, Susan spent 11 years at Kantar's TNS, specializing in research for the Consumer Packaged Goods marketplace. She also worked at Convergys, Parker, and Nielsen in various analytic and statistical roles.

Her statistical experience includes CHAID, Conjoint & Discrete Choice, Cluster Analysis, Correlation Analysis, Coverage/TURF Analysis, Discriminant Analysis, Factor Analysis, Forecasting, Multidimensional Scaling, Perceptual Mapping, Regression and Structural Equation Modeling. She also has extensive experience with multiple research techniques including Awareness & Usage, Brand Equity/Loyalty, Claims Testing, Concept and Product Testing, Customer Satisfaction/Value, Employee Satisfaction, Habits and Practices, Line Optimization, Package Testing and Segmentation.

Susan has provided expert consultation to clients in industries including Automotive, Durable Goods, Financial, Food & Beverage, Health & Beauty Care, Household Products, Insurance, Pharmaceutical, Retail, Technology and Telecommunications.

Susan is currently working toward her master's degree in adult education at the University of Georgia. She completed her undergraduate work at Northern Kentucky University, where she graduated magna cum laude with a B.S. in Marketing and a minor in Mathematics. She was awarded a Certificate of Excellence from the Management and Marketing Department. Her post-graduate education and training include programs in Principles of Marketing Research, Practical Conjoint Analysis & Discrete Choice Modeling, Practical Multivariate Analysis and Tools & Techniques of Data Analysis.

Recent Posts

Research Techniques for Strategic Marketing Management and Marketing Performance

Topics: Marketing Research

Teaching marketing research has given me the opportunity to connect with people who could be future leaders in the marketing research industry, which I find to be an exciting extension of my 'day job' heading up research methods and best practices for Lightspeed. I am currently teaching Consumer Insights at Northern Kentucky University. Teaching marketing research to undergraduates has made me reevaluate how we in the industry talk about various topics and look for simple ways to explain what we do. One of my first challenges was coming up with a framework that summarizes the uses of marketing research and the specific research techniques tied to each use. I assumed this would be simple; however, I quickly realized I couldn't find what I wanted, so I created my own framework.

Topics: Marketing Research, marketing research best practices

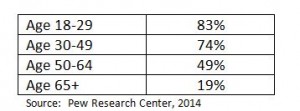

According to a report released by the Boston Consulting Group, millennials will outnumber baby boomers 78 million to 56 million by 2030, and they are starting to form brand and shopping preferences that will likely stick with them for a lifetime. Marketers have to evolve and be much more interactive to attract and retain millennial consumers. Gone are the days of commercials and print media ads; millennials drive and demand a two-way, reciprocal marketing approach. Brands of all sizes try to connect with millennials to understand what drives their attitudes and behaviors, but unfortunately millennial voices are often underrepresented within typical marketing research forums.

Topics: Marketing Research, Millennials, marketing research trends

Sampling Best Practice: Probability versus Nonprobability, Redux

A dozen years ago a debate raged in the marketing research community over the switch from probability sampling methods, such as telephone random-digit dialing (RDD), to the nonprobability sampling methods typical of online access panels. In the years since, most clients have moved to online samples, but some still cling to probability methods. However, the quality of probability samples is now being questioned because of low RDD response rates. In an interesting twist, the same weighting and modeling techniques used with nonprobability samples now often need to be applied to probability samples to account for nonresponse bias.

Topics: Online Sampling, marketing research best practices, nonprobability samples, probability samples

Research has consistently shown that not all panels are the same. Recruitment sources and management practices vary, and this can cause differences among panels. Beyond panels, there are other sources of online survey respondents, such as river, dynamic, and social media sources, and these can produce data that differ from one another as well as from panel data. Given the wide variety of sample sources, each with its own benefits and drawbacks in cost and quality, researchers often struggle with the question, "How can I blend in other sources without impacting my data?"

Topics: Online Sampling, Marketing Research

Do you think like a respondent?

Poor-quality survey design leads to low completion rates, high dropout rates, speeding, suspicious behavior, panel attrition and higher sample costs. Ultimately, poor design can lead to bad business decisions. Mobile may finally force better survey design and better-written questions.

Topics: Respondent experience, Market Research, research on research, questionnaire design

Transitioning to Online: Differences in Marketing Research Data

Everyone hates data transitions, but sometimes they are necessary. In most of the world, marketing research has transitioned to online from either telephone or face-to-face interviewing. When these transitions happen, we typically see data differences. Some of these differences can be measured, calibrated and explained; in other cases, we are less able to identify the root cause.

Topics: Marketing Research Data, Emerging Markets

There is always debate about what makes a great researcher. Many focus on more traditional research skills such as questionnaire design, data analysis, and presentation skills. However, I think what truly turns a good researcher into a great researcher is critical thinking.

Topics: Research Skills

Each month, the Cincinnati AMA Marketing Research Shared Interest Group meets to discuss industry issues, trends, techniques and methodologies. During the January 2015 meeting, we debated the group's burning research questions.

Topics: Market Research Trends

According to change management theory (Burke, 2014), change can be either evolutionary or revolutionary. Evolutionary change consists of incremental steps and doesn't necessarily alter the whole structure or system. Revolutionary change is more radical and is often described as a jolt to the entire system.

Topics: Mobile